Calm Coding: The Workflow That Makes Vibecoding Survivable

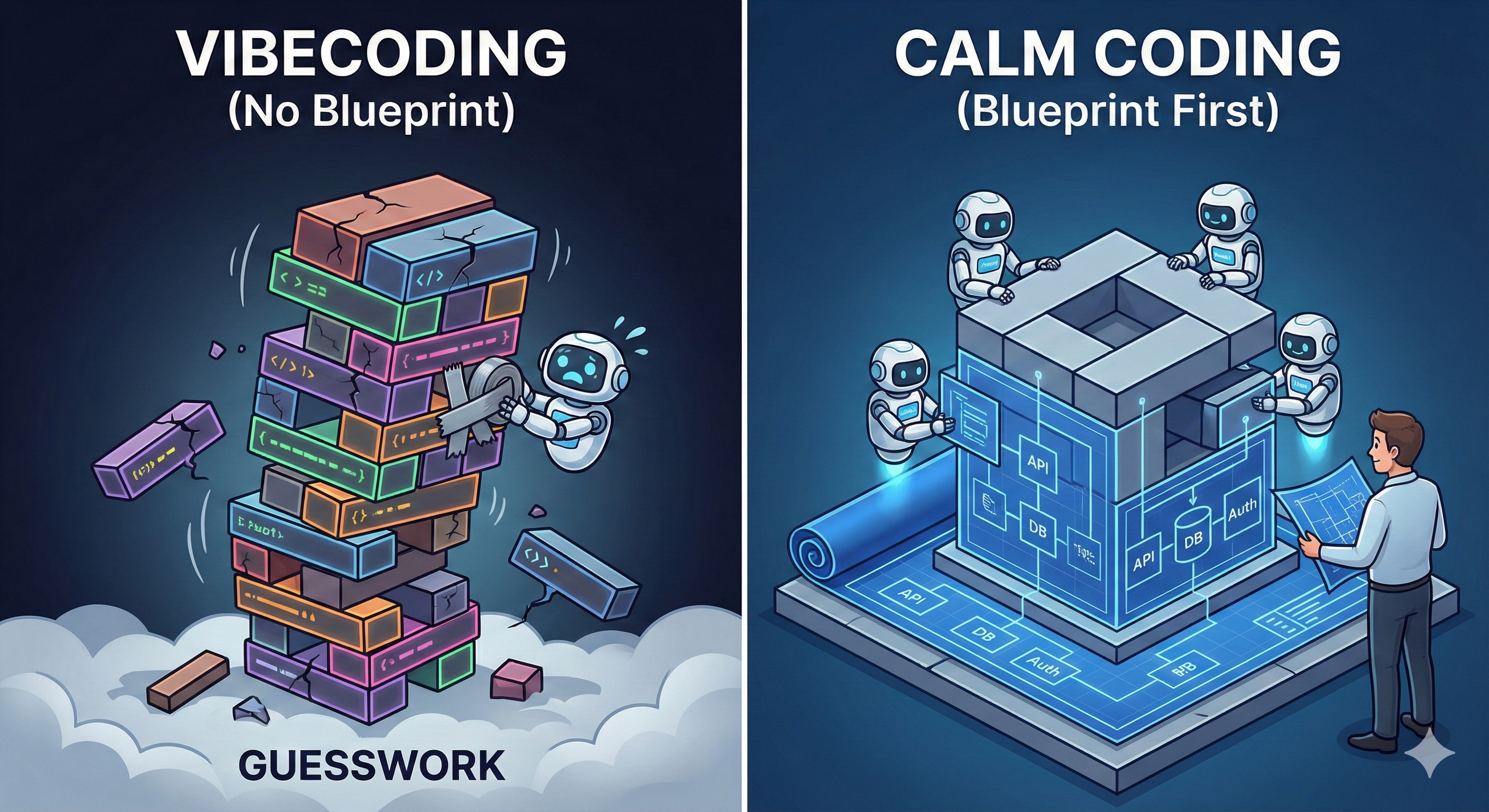

You just asked Claude to build a tool. It built something. You launched it. You asked it to fix a bug. It fixed three things and broke two others. You told it to switch the backend to Python. It did. Nothing works now. You keep iterating until it kind of works.

Congratulations. You vibecoded yourself into a corner.

I've watched this happen dozens of times. Startups. Internal tools. Funded teams. Solo founders. Same pattern every time. And here's the part that makes me genuinely angry: when they come to me to untangle the mess, I tell them to start over and define their requirements first. Every. Single. Time. They ignore it. Every. Single. Time.

But ignoring specs is survivable. What comes next isn't.

Then someone tries to ship the vibecoded demo as the base of a production codebase. And that's when the real damage starts.

I've been called into vibecoded codebases by founders who'd already spent months shipping. The fastest fix in most of them? Delete everything. Start over.

Why Smart People Do This

Vibecoding feels productive. The dopamine hit is real. That's the trap.

But velocity without direction isn't speed. It's drift.

The LLM doesn't know what you need. It guesses. Confidently. You ship the guesses. The next prompt patches over the guesses.

When cornered, it pivots. Suddenly you have a stubbed API in your live product.

Or you ship the vibecoded MVP to real users. It works. For a week. Then you hit 10K users and the whole thing collapses. No one thought about scale because no one wrote it down.

Three weeks later, someone — often me — gets the call. "Can you help us fix this?" The codebase is a Frankenstein of five different architectural decisions. The fastest fix? Delete it and start over. The answer they never want to hear.

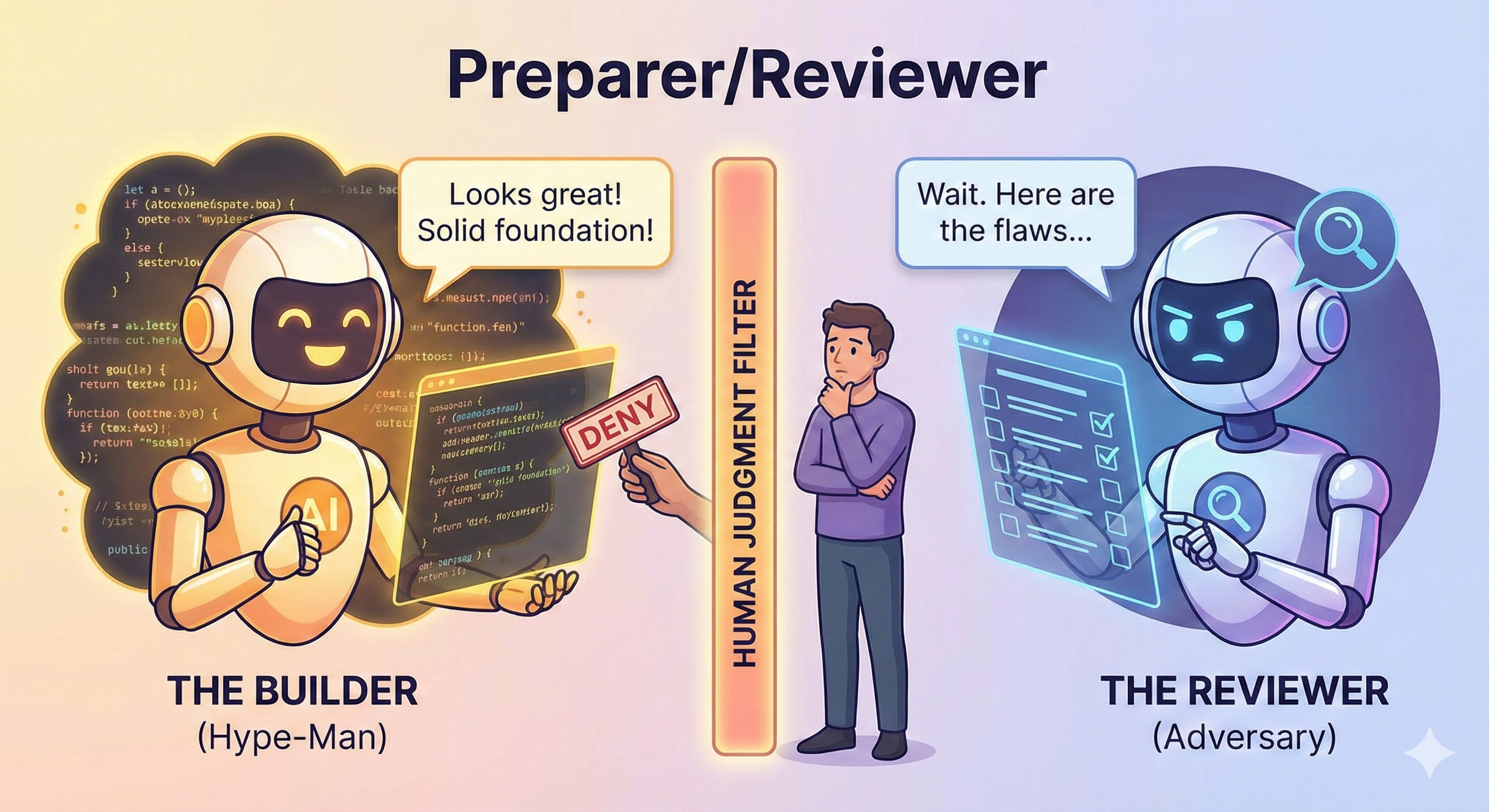

The Hype-Man Problem

LLMs are hype-men. You show them a broken architecture and they tell you it's "a solid foundation." You ask them to review code they just wrote and they tell you it "looks great."

I use this phrase a lot: "enables people to make dumb decisions with high conviction."

The fix is structural. A different LLM, different prompt, different context. Trained to disagree. Tasked to find the holes. If you only talk to a hype-man, you'll never hear "wait, that's a terrible idea."

Calm Coding: The Workflow

Calm Coding is governance for the AI-powered development lifecycle.

Two weeks of human-team coding. AI agents, each with a defined role and clear ownership. Two days. Maybe less.

The workflow isn't the overhead. The workflow is what makes the speed safe.

And this is just the beginning. AI agents are getting better at the parts we don't want them to touch yet — like discovery.

One important thing before we start. The tools in this post will age badly. Fast. Prompt tricks. Claude.md files. Agent coordination patterns. All of that is the fast-moving 20%. This workflow is the slow-moving 80%. The names of the tools will change. The models will change. The capabilities will change. The need for this structure won't.

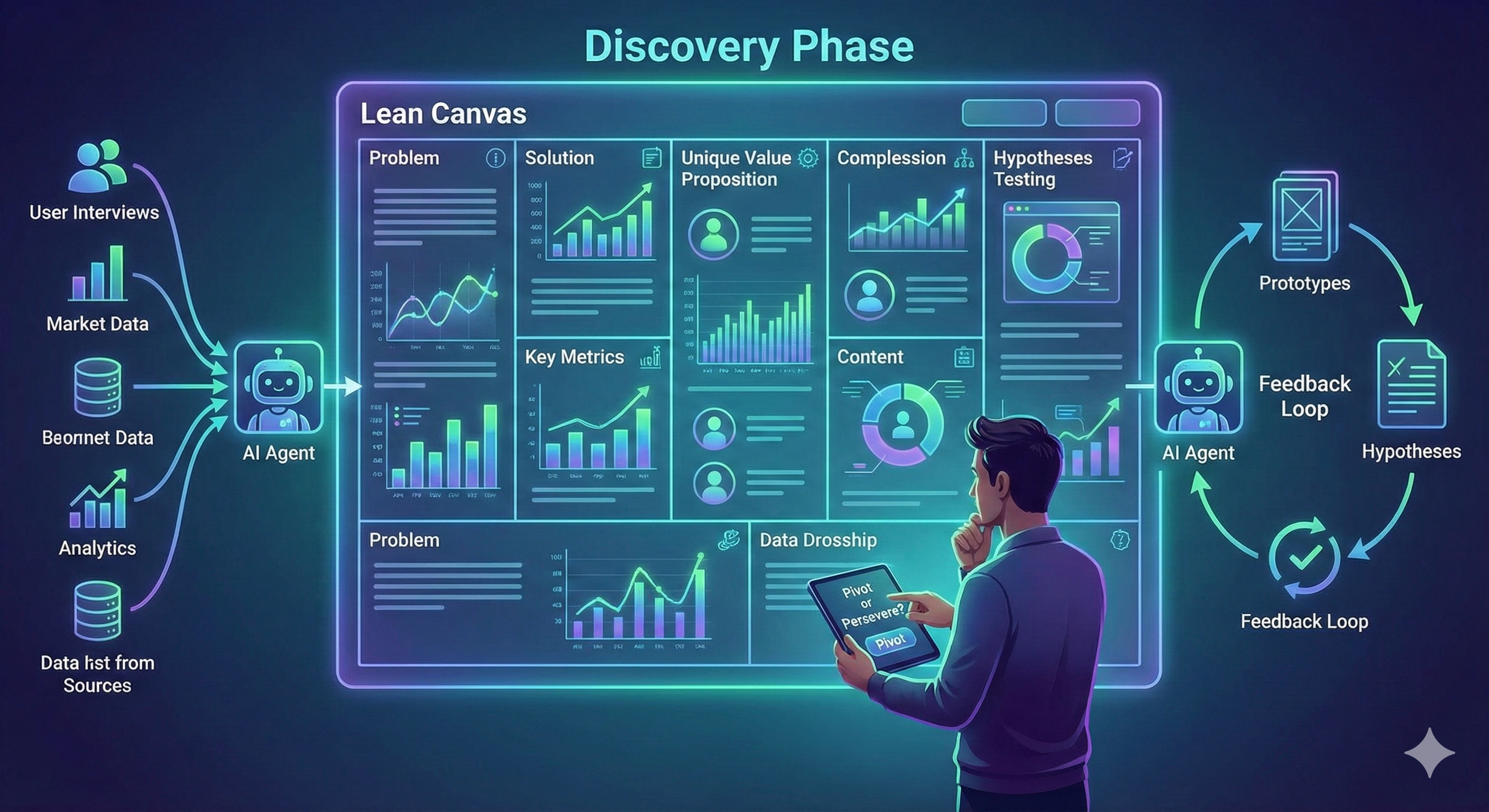

Step 0: Discovery. Before You Build Anything.

Lean Canvas. Problem. Users. Value prop. Journey. Test the hypotheses before you test code.

Here's how I know a codebase is unsalvageable before I read a line of code. I look for .md files. Spec. Architecture. Decision log. Missing or half-finished? Spaghetti-descriptions? I already know the answer.

Start over. You can't debug what was never designed.

Step 0 is the load-bearing wall. Get it wrong, everything downstream is built on a guess.

Should you let an AI run discovery unsupervised? Not yet. But watch this space. Someone gave an agent a budget and a brief. Went to sleep. Woke up to mockups, real user feedback, and a pivot — all executed autonomously overnight. The app it built in the morning was better than the one it started with. It works. Already.

Step 1: Specs. The Most Crucial Step You'll Be Tempted to Skip.

Before a single line of code. You answer these. In writing.

Why are we building this? Skip this and you're building a solution to a problem nobody has.

What is this, actually? Demo. MVP. Production. Three different engineering targets. Treating them the same is how you end up shipping a vibecoded demo to prod.

What's the target hardware and scale? Get this wrong and your architecture is a lie from day one.

Previous iterations? What broke. What users actually complained about. Skip this and your LLM repeats every mistake the last version made.

Regulatory reality check. HIPAA? PCI-DSS? GDPR? SOC 2? "I'm not sure" — that's your red flag. This determines your entire architecture. Getting it wrong costs you the company.

You want your LLM to guess and hallucinate the most crucial step?

Step 2: Research Before You Architect

Stop. Research first.

Most people skip this entirely. Specs straight to architecture = LLM designing on a training cutoff. Already stale.

The move: your problem — not your specs — into a research tool. Perplexity works. The prompt is adversarial:

Here's what I'm trying to solve. Where am I wrong? What are the common gotchas

in this space? What's the latest research or tooling I should know about before

I start architecting? What else did I miss?This is adversarial review. Same principle Anthropic uses for responsible scaling. You're not asking for validation. You're asking to be challenged.

This is where you find out the blog-famous approach has a scaling problem. Or that your specs missed a regulatory edge case.

Something changes? Update the specs. Re-run. Proceed. It's a loop — but an intentional one. Exit condition: nothing new changes the architecture. The vibecoding loop has no exit condition. It just keeps going until someone calls me.

Step 3: Architecture Before Code. Period.

Specs done. Research done. Reviewed. Signed off. Now: architecture.

Not code. Not a prototype. Architecture. The LLM asks. You answer. Not the other way around.

"Read these specs. Ask me clarifying questions before we draft anything. Don't assume. I prefer AWS, Python, React, Tailwind CSS, Postgres + pgVector but I am flexible. Query me on anything that conflicts or needs a decision."

The "query me" instruction surfaces the decisions that actually matter. Wrong assumption here costs a rewrite, not an hour.

"10K concurrent users. Bursty or steady-state?" — Changes whether you need auto-scaling, a queue, or both.

"pgVector for the demo. Core production feature or MVP-only?" — Locks or unlocks your production data architecture.

"No mention of auth. Internal-only or end users?" — Changes your entire security model.

One caveat: I used Grok for architecture planning — it outperformed the others. But don't build loyalty to a model. The discipline is permanent. The tools are ephemeral.

Step 4: Code Like You're Running a Team. Because You Are.

Now you build. This is where vibecoding tutorials fall apart. They treat "the LLM" as one thing. One agent. One conversation. One monolithic "hey, build me the whole app."

Don't do that. A monolithic prompt to a single agent produces a monolithic codebase that nobody — human or AI — can reason about.

You have a team now. Act like it.

But not the team you're used to. These are junior specialists. Fast. Capable. Zero context memory between sessions. Every prompt is day one for them. The structure is what holds them together.

Architect. Designs the system. Asks before it builds.

Reviewer. Challenges assumptions. Finds the holes.

Tester. One job: break it.

Microsoft calls this multi-agent QA. Same idea. Different name.

Delegation. But Not the Way You Think.

Each agent gets a role. You are the lead API coder. You own the test data layer. You are the frontend engineer. Scoped. Clear.

Here's where I break from conventional wisdom: every agent gets full project context. Specs, architecture, everything. An agent that only sees its slice can't flag cross-layer conflicts. The boundary: "You own X. Full visibility into everything else. Don't touch it."

The Sequence. It Matters.

API first. The contract. Everything else talks to this. Nothing gets built until this is reviewed.

Test data second. Realistic. Representative. Not five rows of lorem ipsum. Shape it to match your specs.

Test cases third. Against the API contract. Before any frontend or backend logic. Different agent writes tests for code a different agent is about to write. Tests fail day one? You find out before you've built on broken assumptions.

Frontend and backend in parallel. Same contract. Same test data. Same test cases. They can't drift.

Real data replaces test data last. Not first. Not "let's just hook it up and see what happens." Last.

The Parts No One Wants to Do

Someone always has to do the weird, unglamorous work. That's true for human teams. It's true for AI teams.

LLMs own the well-defined stuff. API scaffolding. CRUD. Standard components. Boilerplate tests. Hand them everything textbook.

The non-textbook problems are yours.

I built a home media automation system. Agents handled everything standard. Deep links were broken. No SDK. No docs. The fix: simulate remote key presses programmatically. Precise timing. Specific sequences. Dropped inputs handled manually.

That part I coded myself. The agents would have generated something. A confident guess. A hallucination. No prompt replicates debugging intuition or hardware-level timing.

The rule: if it's well-documented and well-understood, delegate it. If it requires judgment, taste, or real debugging — own it.

Step 5: Testing. But Not The Way The TDD Purists Want.

The TDD vs. "test after" debate is 20 years old. Wrong question. The right question: how stable is your contract?

Spec locked and reviewed? TDD is almost free. Agent generates tests from the spec in minutes. And those tests become something more important than coverage: they become your coordination layer. Multiple agents. Same test suite. No drift without a failure.

Still in demo territory? Spec might shift? Code first. Test after. But test before you show it to anyone. Well-tested wrongness is still wrongness. TDD against an unvalidated spec just makes it more expensive to undo.

Who Runs the Tests Isn't Random

The agent that wrote the code does not run the tests. The agent that wrote the tests does not analyze coverage. Different agent. Different context. Different prompt.

The agent that wrote the code is compromised. It knows what it built. It'll rationalize. It'll make tests pass that don't prove anything. That's not an AI problem. That's a conflict of interest. Same reason you don't audit your own books.

Dedicated testing agent. One job: break it. Find the gaps. Not "this looks solid" — that's the hype-man. "Here's what's untested. Here's why it matters. Here's what I'd hit next."

Write at least three tests per feature that would make an agent uncomfortable.

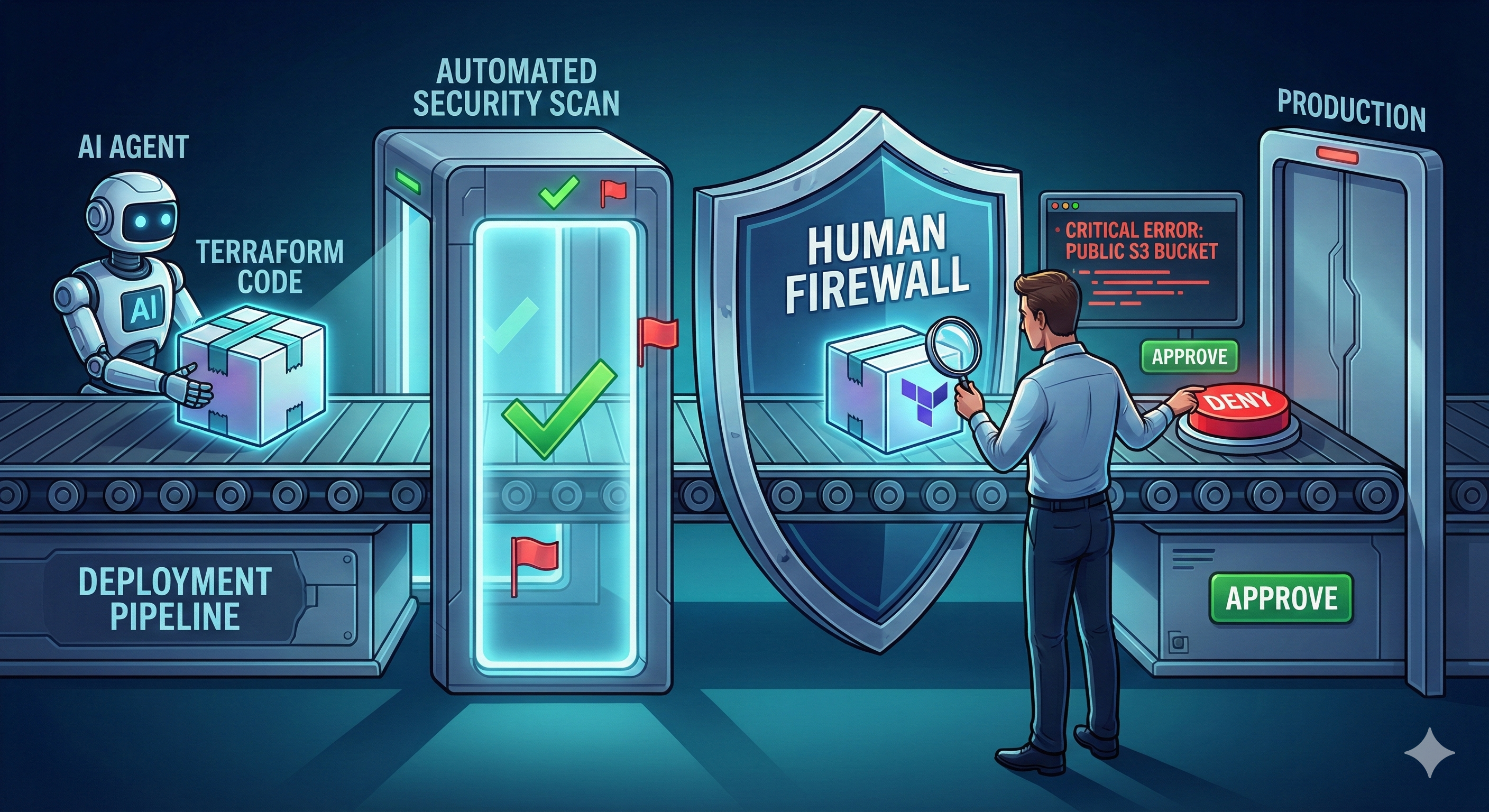

Step 6: Deploy. But Know What You're Actually Deploying.

This is where demo/MVP/production stops being philosophical. Starts costing real money.

Demo? Amplify. Test data. Ship it. 15 minutes. Goal: works in a browser for a pitch. Security posture: good enough. It dies after the meeting.

Production? Everything changes. Deployment doesn't figure out your infrastructure. It executes a plan that's already been reviewed.

Why This Step Is Different

Agents generate perfectly reasonable-looking Terraform. But reasonable isn't deterministic. That's the problem. Reasonable-looking = public S3 bucket. Overprivileged IAM. Wide-open security group. Each one looks fine. Together, they're an invitation.

I had a desk neighbor in my first coworking space in SF. One of the nicest people in the room. Someone got into their AWS account. Spun up Bitcoin mining. Burned through their credit card in days. One permission slightly too broad. That's it.

Not an edge case. The expected outcome when you skip the review. Not if. When.

What This Actually Looks Like In Practice

Agent: fast, thorough, tireless. Generates code. Scans for known misconfigs. Human: reads a permission policy and feels something is off before the scanner flags it.

(That split — not "human supervises AI" but "each side owns different strengths" — is worth its own post. It's coming.)

Second agent runs security analysis. One of the few steps where scanning tools — not just another LLM — belong. Then you review every line before it touches a real account.

Production deployment review checklist:

- Every IAM policy and role

- Every security group rule

- Every S3 bucket ACL

- Every environment variable

- Remote state and state locking configured

- No hardcoded credentials anywhere in the stack

Don't Forget: Terraform State Is Not Optional

Agents will skip state management if you don't explicitly call it out. Remote state. State locking. Backend configuration. Define it in Step 3 or stop before you generate a single .tf file. A missing state lock quietly corrupts your infrastructure — and no agent flags it unless you tell it to look. Infrastructure without reproducible state isn't infrastructure. It's archaeology.

Where This Is All Going

Right now, AI makes coding fast. Calm Coding is what makes it survivable.

Step 0 is about to get automated. Real user tests. Real feedback. Real pivot decisions — overnight. That's not coming. It's here.

Skip the discipline, and you'll be deleting your repo in three weeks. I've seen it. Dozens of times.

Published February 2026